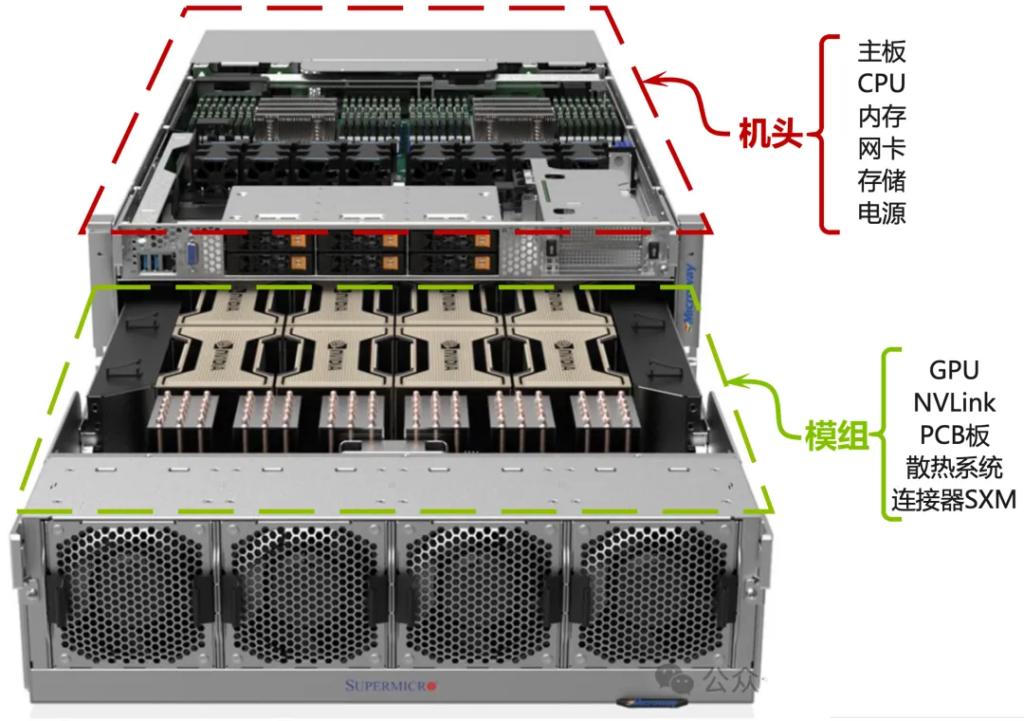

I. The Head

1) Definition: The head is the core compute and system-control section of a server, meaning everything except the GPUs. You can think of it as a "super motherboard without GPUs". It handles overall scheduling, management, networking and general-purpose compute tasks.

2) Composition:

- CPUs: Typically two high-performance server-class CPUs, such as Intel Xeon or AMD EPYC.

- Motherboard: The main PCB hosting the CPUs, memory and expansion slots.

- System memory: Large amounts of DDR4/DDR5 memory attached to the CPUs.

- Network interfaces: Multiple high-speed NICs (100, 200 or 400 Gb/s InfiniBand or Ethernet) for high-speed interconnect between servers.

- System management controller (BMC): The baseboard management controller, used for remote monitoring and management.

- Storage interfaces: Controllers and connectors for NVMe or SATA/SAS drives.

- Power modules: Power supplies feeding the head and the modules connected to it.
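NIC speeds are quoted in gigabits per second, while training data and model sizes are usually given in bytes. The sketch below converts between the two and estimates an idealized transfer time. The configuration of eight 400 Gb/s NICs and the 100 GB payload are hypothetical. The sketch also assumes perfect striping across NICs and ignores protocol overhead.

```python
# Minimal sketch: converting NIC line rates (Gb/s) into byte throughput (GB/s).
# Assumes ideal striping across NICs and ignores protocol/encoding overhead.

BITS_PER_BYTE = 8


def gbit_to_gbyte(rate_gbps: float) -> float:
    """Convert a line rate in gigabits per second to gigabytes per second."""
    return rate_gbps / BITS_PER_BYTE


def aggregate_nic_bandwidth(nic_count: int, rate_gbps: float) -> float:
    """Total one-direction bandwidth in GB/s across identical NICs."""
    return nic_count * gbit_to_gbyte(rate_gbps)


def transfer_seconds(payload_gb: float, nic_count: int, rate_gbps: float) -> float:
    """Idealized time to move payload_gb gigabytes to another server."""
    return payload_gb / aggregate_nic_bandwidth(nic_count, rate_gbps)


if __name__ == "__main__":
    # Hypothetical head: eight 400 Gb/s NICs, sending a 100 GB payload.
    print(gbit_to_gbyte(400))               # 50.0
    print(aggregate_nic_bandwidth(8, 400))  # 400.0
    print(transfer_seconds(100, 8, 400))    # 0.25
```

Real throughput will be lower once headers, congestion and collective-communication patterns are taken into account. Treat these figures as an upper bound.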

II. The Module (GPU Module)

1) Definition: A module is a pluggable unit that carries the GPUs and their high-speed interconnect components. A single head can attach to multiple modules, which allows high GPU density and makes maintenance and upgrades easier.

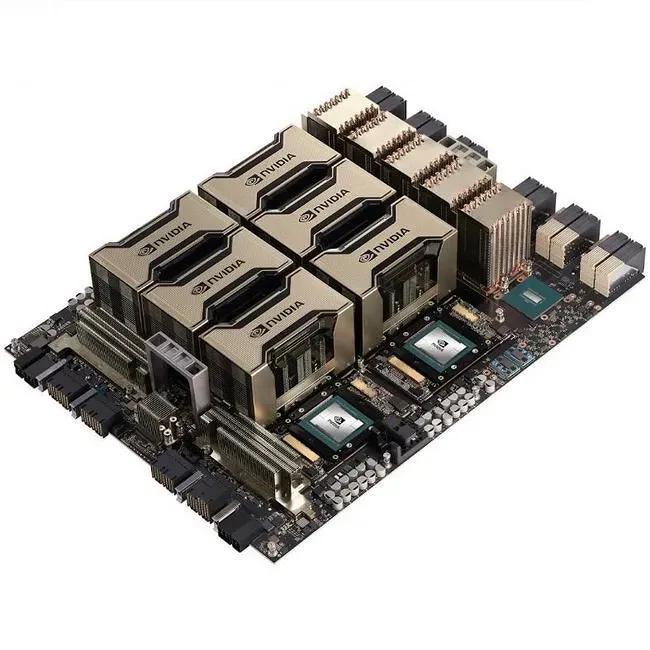

2) Composition:

- GPUs: The core of the module, typically 4 or 8 high-performance GPUs (NVIDIA A100, H100, H200, etc.).

- GPU interconnect: The module's key capability. This is usually NVLink, often routed through NVSwitch chips, which gives GPU-to-GPU bandwidth far exceeding PCIe.

- PCB: The board on which the GPUs and NVSwitch chips are mounted.

- Cooling system: High-capacity cooling for the GPUs and switch chips, either large heatsinks with fans or integrated liquid-cooling plates.

- Connectors: Each GPU sits in an SXM socket on the module board. High-speed connectors link the module to the head's backplane for power, data and control signals.
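The claim that NVLink bandwidth "far exceeds PCIe" can be checked with simple arithmetic.

The sketch uses NVIDIA's published per-GPU NVLink totals: 600 GB/s for A100 SXM and 900 GB/s for H100 SXM, both counting both directions. It compares them with a PCIe x16 link of the generation each platform typically uses. Pairing A100 with PCIe 4.0 and H100 with PCIe 5.0 is an assumption for illustration. Only 128b/130b line encoding is modeled, not other protocol overhead.

```python
# Rough comparison of NVLink and PCIe x16 bandwidth per GPU.
# NVLink totals are NVIDIA's published per-GPU aggregates (both directions).

NVLINK_TOTAL_GBS = {"A100 SXM": 600.0, "H100 SXM": 900.0}


def pcie_gbs_per_direction(gt_per_s: float, lanes: int = 16) -> float:
    """Usable one-direction PCIe bandwidth in GB/s (Gen3+ uses 128b/130b encoding)."""
    return gt_per_s * lanes * (128 / 130) / 8


def nvlink_to_pcie_ratio(gpu: str, pcie_gt_per_s: float) -> float:
    """How many times more bandwidth NVLink offers than a bidirectional PCIe x16 link."""
    pcie_bidirectional = 2 * pcie_gbs_per_direction(pcie_gt_per_s)
    return NVLINK_TOTAL_GBS[gpu] / pcie_bidirectional


if __name__ == "__main__":
    print(round(pcie_gbs_per_direction(16), 1))            # PCIe 4.0 x16: 31.5
    print(round(pcie_gbs_per_direction(32), 1))            # PCIe 5.0 x16: 63.0
    print(round(nvlink_to_pcie_ratio("A100 SXM", 16), 1))  # 9.5
    print(round(nvlink_to_pcie_ratio("H100 SXM", 32), 1))  # 7.1
```

Even against the matching PCIe generation, NVLink offers roughly 7 to 10 times the bandwidth. This is why GPU-to-GPU traffic is kept inside the module where possible.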

III. Relationship Between the Head and Modules

Think of the GPU server as a factory.

- The head is the central office and logistics control (the CPUs are the brain, the network is the phone lines, memory holds the documents).

- The modules are production workshops (the GPUs are robotic production lines; NVLink is the internal conveyor belt between them).

- The head issues work orders and coordinates with other servers; within each module, GPUs collaborate via NVLink to complete heavy computational tasks.
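The factory analogy can be turned into a small, purely illustrative model. It is a hypothetical data structure that locates each GPU by server, module and slot, and picks the interconnect a transfer between two GPUs would most likely use.

`GpuLocation` and `link_between` are invented names, not part of any real API. One case is an assumption: that GPUs in different modules of the same server communicate through the head over PCIe. Real platforms may route this traffic differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuLocation:
    """Hypothetical address of a GPU: which server (head), which module, which slot."""
    server: int
    module: int
    slot: int


def link_between(a: GpuLocation, b: GpuLocation) -> str:
    """Pick the interconnect a GPU-to-GPU transfer would most likely use.

    Simplified model following the factory analogy; real systems may differ.
    """
    if a == b:
        return "local"
    if a.server != b.server:
        # Different servers: traffic leaves through the head's NICs.
        return "network (InfiniBand/RoCE)"
    if a.module != b.module:
        # Assumption: modules in one server exchange data through the head over PCIe.
        return "PCIe via head"
    # Same module: GPUs talk directly over NVLink (possibly via NVSwitch).
    return "NVLink"


if __name__ == "__main__":
    gpu0 = GpuLocation(server=0, module=0, slot=0)
    print(link_between(gpu0, GpuLocation(0, 0, 5)))  # NVLink
    print(link_between(gpu0, GpuLocation(1, 0, 0)))  # network (InfiniBand/RoCE)
```

Schedulers use the same idea when they place tightly coupled work on GPUs that share the fastest link.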

IV. Additional Parts of a Complete GPU Server

Beyond the head and modules, a full GPU server typically includes:

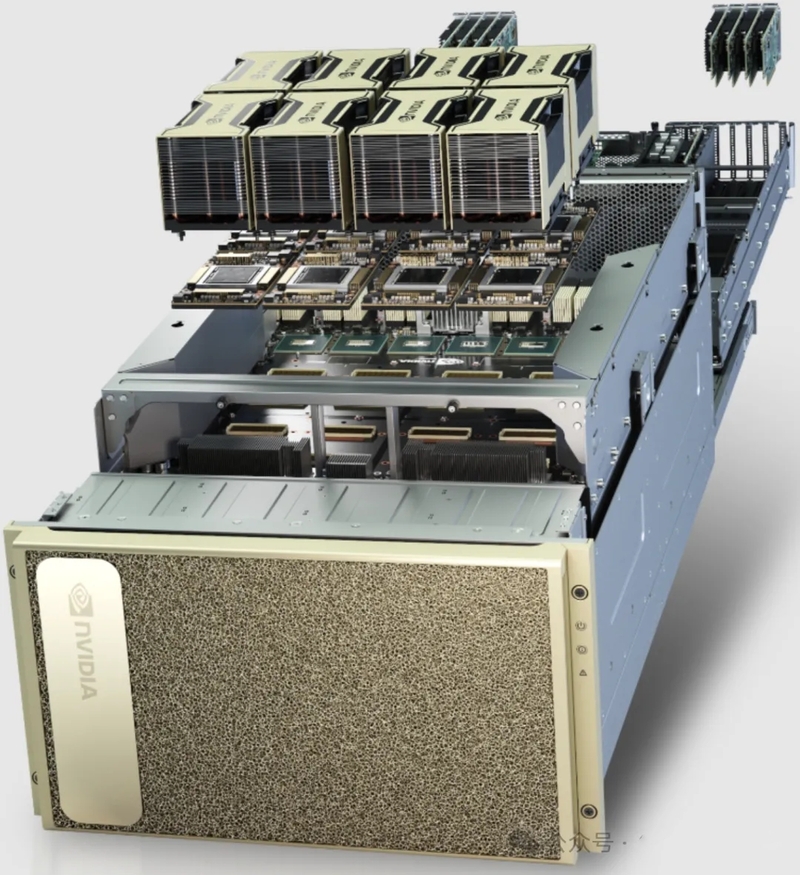

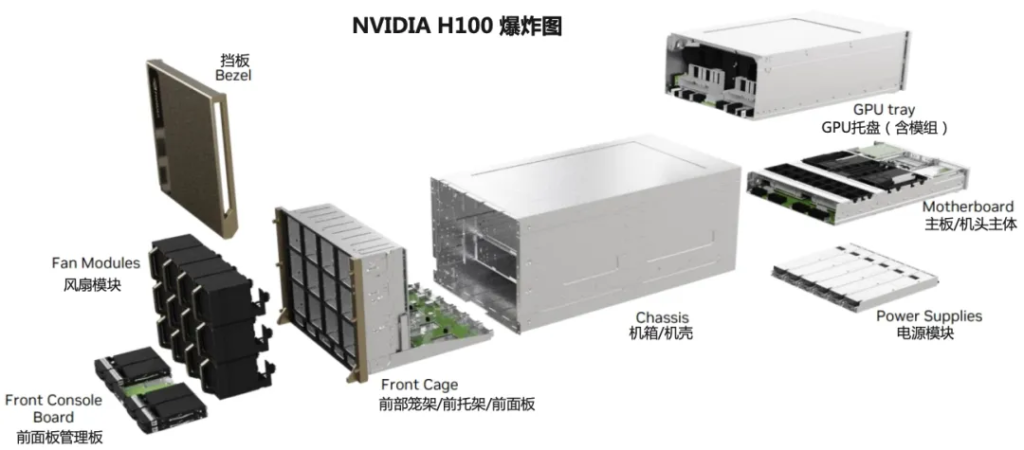

1. Chassis and Backplane

- Structure: A rugged metal enclosure and internal backplane.

- Role: The backplane is the central hub connecting head, modules, power and storage, providing high-power distribution and high-speed signaling. The chassis provides mechanical support and airflow channels.

2. Cooling System

- Air cooling: Multiple high-power fans with dedicated airflow channels.

- Liquid cooling: Increasingly used in high-density, high-power servers, via cold-plate or immersion cooling.
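Why air cooling needs so many high-power fans follows from a basic heat balance: airflow = heat / (air density × specific heat × temperature rise).

The sketch applies this to a hypothetical 10 kW server. It uses approximate room-temperature air properties, and the 20 K inlet-to-outlet rise is an assumed design target, not a vendor figure.

```python
# Sensible-heat balance: airflow needed to remove a given heat load.
# Air properties are approximate values near sea level and room temperature.

AIR_DENSITY_KG_PER_M3 = 1.2
AIR_SPECIFIC_HEAT_J_PER_KG_K = 1005.0
CFM_PER_M3_PER_S = 2118.88  # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3_per_s(heat_w: float, delta_t_k: float) -> float:
    """Airflow that carries heat_w watts away while warming by delta_t_k kelvin."""
    return heat_w / (AIR_DENSITY_KG_PER_M3 * AIR_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)


def required_airflow_cfm(heat_w: float, delta_t_k: float) -> float:
    """Same airflow, converted to cubic feet per minute (the usual fan rating unit)."""
    return required_airflow_m3_per_s(heat_w, delta_t_k) * CFM_PER_M3_PER_S


if __name__ == "__main__":
    # Hypothetical 10 kW server with a 20 K inlet-to-outlet temperature rise.
    print(round(required_airflow_cfm(10_000, 20)))  # 878
    # Allowing only a 10 K rise doubles the required airflow.
    print(round(required_airflow_cfm(10_000, 10)))  # 1757
```

Airflow scales linearly with heat load. As per-server power keeps rising, the fan count and noise grow quickly, which is one reason dense servers are moving to liquid cooling.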

3. Power Delivery System

4. Storage System

- Multiple NVMe SSDs for fast local I/O so GPUs don’t starve waiting for training data reads/writes.
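A quick sizing check shows why several NVMe drives are used rather than one. The sketch multiplies out the read rate the GPUs demand and divides by the sustained read rate of a single drive.

Every number in the example is hypothetical: 8 GPUs, 2,000 samples per second per GPU, 500 KB per sample and 3.5 GB/s per drive. The sketch ignores caching in system memory and data-loader prefetching.

```python
import math


def required_read_gb_per_s(gpus: int, samples_per_s_per_gpu: float,
                           bytes_per_sample: int) -> float:
    """Aggregate read throughput (GB/s) needed to keep every GPU fed."""
    return gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


def drives_needed(required_gb_per_s: float, per_drive_gb_per_s: float) -> int:
    """Smallest number of drives whose combined sustained reads meet the demand."""
    return math.ceil(required_gb_per_s / per_drive_gb_per_s)


if __name__ == "__main__":
    # Hypothetical workload: 8 GPUs, 2000 samples/s each, 500 KB per sample.
    demand = required_read_gb_per_s(8, 2000, 500_000)
    print(demand)                      # 8.0
    print(drives_needed(demand, 3.5))  # 3
```

In practice, operators add headroom on top of this minimum for checkpoint writes and uneven access patterns.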

5. High-Speed Networking

- Specialized NICs (e.g., NVIDIA ConnectX) supporting InfiniBand/RoCE for low-latency, high-bandwidth distributed training across servers.

6. Rack Rails and Cabling

- Standard rail kits and power/network cabling for rack installation.