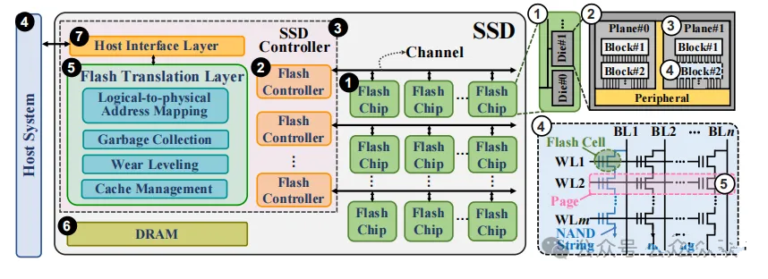

A classic SSD architecture is illustrated by the diagram below: it shows the SSD controller, DRAM, and NAND chips, and how the flash controller interacts with the NAND architecture. It also briefly explains hierarchical NAND concepts such as die, plane, block, page, and cell.

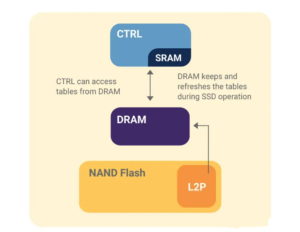

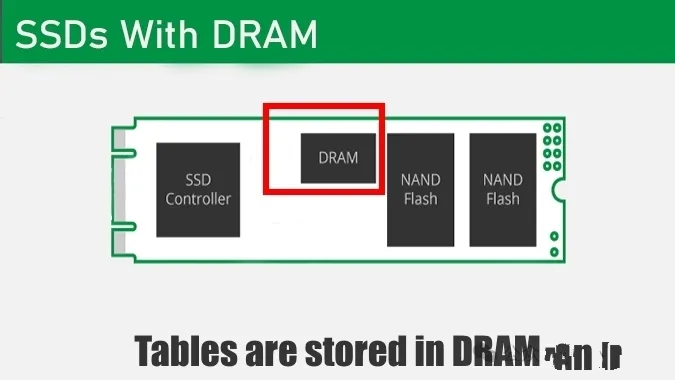

Modern solid-state drives (SSDs) commonly include a certain amount of DRAM as a cache. Its primary function is to store the FTL (Flash Translation Layer) mapping table and other temporary data, which speeds up the SSD’s data processing.

The relationship between DRAM cache capacity and NAND flash capacity is not fixed, but generally, larger-capacity SSDs require larger DRAM to maintain efficient FTL management. Reasons include:

Mapping complexity increases. As NAND capacity grows, the FTL mapping table becomes larger and more pages/blocks require management, necessitating additional cache space for these mappings.

Performance requirements. Large SSDs often target high-performance applications; higher read/write rates demand quicker cache responses. Sufficient DRAM ensures that under high concurrent workloads the FTL tables need not be repeatedly reloaded, avoiding performance bottlenecks.

Cost vs. value. Although DRAM cost is significant, vendors provision DRAM according to product positioning. Historical heuristics (for example, 1MB DRAM per 1GB NAND) are not strict rules; different SKUs may adopt different ratios.
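The "1MB DRAM per 1GB NAND" heuristic falls out of simple arithmetic if one assumes a flat page-level mapping table with one 4-byte entry per 4 KiB logical page. Both figures are assumptions for illustration, not values stated for any particular drive, and the function name is hypothetical.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def ftl_table_bytes(nand_bytes: int, page_bytes: int = 4096, entry_bytes: int = 4) -> int:
    """Size of a flat page-level mapping table (assumed layout: one entry per page)."""
    entries = nand_bytes // page_bytes
    return entries * entry_bytes


# How the table grows with NAND capacity under these assumptions.
for capacity_gib in (1, 256, 1024, 4096):
    table = ftl_table_bytes(capacity_gib * GIB)
    print(f"{capacity_gib} GiB NAND -> {table // MIB} MiB mapping table")
```

A coarser mapping granularity (for example, a larger `page_bytes`) shrinks the table proportionally, which is one way a SKU could end up with a different DRAM-to-NAND ratio.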

In traditional SSDs, DRAM plays essential roles such as storing metadata, buffering write data, coalescing small writes into larger ones, and assisting internal data movement for garbage collection. Because NAND behaves differently from spinning media, DRAM helps bridge these differences and optimize overall throughput.

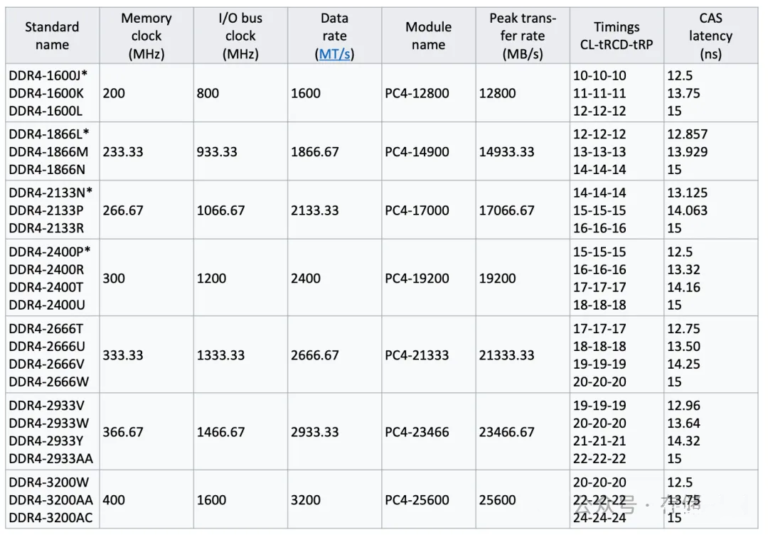

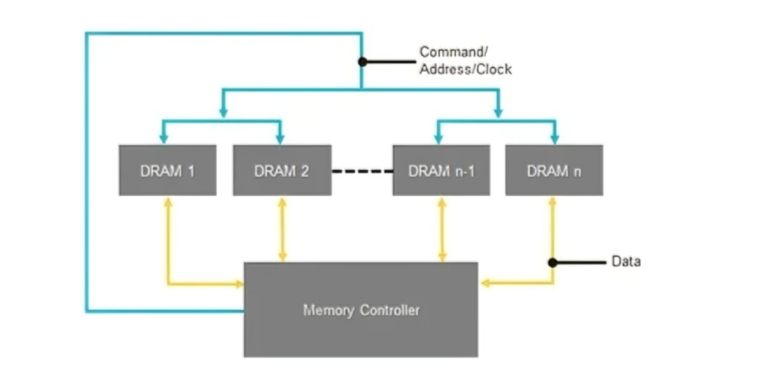

SSD DRAM typically operates at high data rates (e.g., DDR4 1600-3200Mbps or higher) and commonly uses a JEDEC-defined fly-by topology (clock/command lines daisy-chained across multiple DRAM devices). After power-up, three core problems must be resolved by training:

NAND flash chips are the core medium by which SSDs store data, equivalent

The core role of the cache module is to temporarily store frequently

The interface is responsible for connecting to the host and transmitting

After covering hardware and software architecture, we now break down the SSD’s “input/output channels”: the front-end protocol, which communicates with the host, and back-end control, which communicates with NAND flash. These two parts directly determine SSD transfer speed, compatibility, and expandability, and are key criteria for enterprise selection.

The front-end protocol is the communication standard between the SSD and the host. It defines the data transfer format, commands, and bandwidth. The mainstream protocols are SATA and NVMe, and their differences are significant: they directly determine the performance ceiling of an SSD and are one of the key distinctions between consumer SSDs and enterprise SSDs.

SATA (Serial ATA) is the traditional storage protocol, originally designed for HDDs and later adapted for SSDs. It includes SATA 1.0 (1.5Gbps), SATA 2.0 (3Gbps), and SATA 3.0 (6Gbps).

The current mainstream version is SATA 3.0, with a maximum bandwidth of about 600MB/s (actual transfer speed about 500-550MB/s). Its core characteristics are: very broad compatibility, supporting all devices with SATA interfaces (PCs, servers, laptops) and allowing direct replacement of HDDs without hardware or software changes; a low performance ceiling, because protocol bandwidth limits sequential read/write speed to about 550MB/s and random read/write speed to about 100-200MB/s, making it unsuitable for enterprise high-frequency read/write workloads such as databases and virtualization; and low cost, because SATA SSD controllers and interfaces are inexpensive, making them suitable for entry-level consumer use and large-capacity backup scenarios.

NVMe (Non-Volatile Memory Express) is a protocol designed specifically for SSDs. It is based on PCIe and avoids SATA’s performance limits.

Current mainstream versions are NVMe 1.4 and NVMe 2.0, and maximum transfer bandwidth depends on the PCIe version (PCIe 3.0 x4 about 32Gbps, PCIe 4.0 x4 about 64Gbps, PCIe 5.0 x4 about 128Gbps). Its core characteristics, especially important for enterprise use, are: extremely high performance, with sequential read/write speeds of 3,000-10,000MB/s and random read/write speeds of 1,000k-2,000k IOPS, 5-10 times that of SATA SSDs, making it suitable for high-frequency, low-latency workloads such as databases, virtualization, and AI training; very low latency, because NVMe simplifies the data transfer path and can reduce latency to 10-50 microseconds, far lower than SATA SSDs (100-200 microseconds); support for multiple queues, allowing concurrent processing of many read/write requests (the specification allows up to 64K queues, each up to 64K commands deep), improving multi-task performance; and higher cost, because NVMe SSD controllers and interfaces are more expensive, with prices typically 1.5-2 times those of same-capacity SATA SSDs, making them suitable for enterprise core workloads.
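The link-rate figures quoted for SATA and PCIe are raw signaling rates. As a rough sketch, usable bandwidth can be estimated by removing the line-encoding overhead: SATA uses 8b/10b encoding, and PCIe 3.0 through 5.0 use 128b/130b. The helper name below is hypothetical, and the estimate ignores packet and protocol overhead, so real drives land somewhat lower.

```python
def link_mb_per_s(gigatransfers_per_s: float, lanes: int,
                  payload_bits: int, encoded_bits: int) -> float:
    """Usable bandwidth in MB/s after line-encoding overhead only."""
    raw_bits_per_s = gigatransfers_per_s * 1e9 * lanes
    return raw_bits_per_s * payload_bits / encoded_bits / 8 / 1e6


sata3 = link_mb_per_s(6.0, lanes=1, payload_bits=8, encoded_bits=10)
pcie3_x4 = link_mb_per_s(8.0, lanes=4, payload_bits=128, encoded_bits=130)
pcie4_x4 = link_mb_per_s(16.0, lanes=4, payload_bits=128, encoded_bits=130)
pcie5_x4 = link_mb_per_s(32.0, lanes=4, payload_bits=128, encoded_bits=130)

for name, value in [("SATA 3.0", sata3), ("PCIe 3.0 x4", pcie3_x4),
                    ("PCIe 4.0 x4", pcie4_x4), ("PCIe 5.0 x4", pcie5_x4)]:
    print(f"{name}: about {value:,.0f} MB/s")
```

Under these assumptions SATA 3.0 comes out at 600MB/s and PCIe 3.0 x4 at a little under 4,000MB/s, which is consistent with the sequential-speed ranges given above.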

A note: NVMe is further divided into NVMe over PCIe (local use) and NVMe over Fabrics (NVMe-oF, remote use). The latter is used in data centers for remote SSD sharing and high-speed access, similar in logic to NFS sharing but with much higher performance.

TRIM is a “cooperative command” between the host and the SSD. Its core purpose is to notify the SSD which data is invalid. When the host deletes a file, it does not directly delete physical data from the SSD; instead, it uses the TRIM command to tell the SSD that the data corresponding to a logical address is now invalid. FTL then marks the corresponding physical page as invalid and prepares it for garbage collection.

The core value is that after TRIM is enabled, SSD garbage collection efficiency improves significantly, because less data needs to be migrated during cleanup. This reduces performance fluctuations and also extends SSD lifespan. Enterprise SSDs should enable TRIM (supported by both Windows and Linux).
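Why TRIM reduces garbage-collection work can be sketched with a toy model. It assumes a victim block is described only by the list of LBAs stored in it and that every page not known to be invalid must be copied elsewhere before the block is erased; the names are hypothetical and real firmware tracks far more state.

```python
def pages_to_migrate(block_lbas, trimmed_lbas):
    """Count pages GC must copy out of a victim block before erasing it."""
    return sum(1 for lba in block_lbas if lba not in trimmed_lbas)


victim_block = [10, 11, 12, 13, 14, 15, 16, 17]  # LBAs stored in one block
deleted_by_host = {12, 13, 14, 15, 16}           # file removed in the file system

# Without TRIM the SSD never learns about the deletion, so all pages look valid.
without_trim = pages_to_migrate(victim_block, set())
# With TRIM the deleted LBAs are already marked invalid and are simply dropped.
with_trim = pages_to_migrate(victim_block, deleted_by_host)

print(f"pages copied without TRIM: {without_trim}, with TRIM: {with_trim}")
```

On Linux, TRIM is commonly issued either continuously through the `discard` mount option or periodically with the `fstrim` utility; which one suits a given deployment depends on the workload.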

Back-end control is the communication mechanism between the controller and NAND flash. It converts FTL commands (read, write, erase) into electrical signals that NAND flash can understand, while also implementing parallel control across multiple channels and chips to improve data transfer efficiency – essentially the SSD’s internal bus.

Core components and logic include: the flash controller (integrated in the controller), which drives NAND flash chips to perform read/write/erase operations and supports NAND interface standards such as ONFI and Toggle (mainstream is ONFI 4.0); multi-channel parallelism, where the controller uses multiple channels (such as 8 or 16 channels) to control multiple NAND chips simultaneously, with each channel connecting to multiple dies to enable parallel reads and writes, which is the core means by which SSD throughput is increased (for example, an 8-channel SSD can read and write eight chips simultaneously, giving up to roughly eight times the throughput of a single-channel design in the ideal case); and bad block management, where the back-end control continuously monitors NAND blocks, marks failed blocks as unusable to prevent data loss, and uses reserved over-provisioned space to replace them, ensuring normal SSD operation.

The degree of back-end parallelism (number of channels and chips) largely determines maximum SSD throughput. Enterprise high-performance SSDs usually adopt 16-channel or 32-channel designs; the higher the parallelism, the higher the achievable throughput.
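The parallelism argument can be sketched with a toy timing model. It assumes pages are striped round-robin across channels and that every page operation takes the same fixed time (50 microseconds here, a made-up figure); real drives also contend on dies, planes, and the host link, so treat the numbers as illustrative only.

```python
import math


def channel_for_page(page_index: int, channels: int) -> int:
    """Round-robin striping: consecutive pages go to consecutive channels."""
    return page_index % channels


def transfer_time_us(num_pages: int, channels: int, page_time_us: float = 50.0) -> float:
    """Time to move num_pages when each channel handles one page at a time."""
    rounds = math.ceil(num_pages / channels)
    return rounds * page_time_us


single = transfer_time_us(64, channels=1)
eight = transfer_time_us(64, channels=8)
print(f"64 pages: 1 channel {single:.0f} us, 8 channels {eight:.0f} us, "
      f"speedup {single / eight:.1f}x")

# A request that does not fill every channel evenly gains less than 8x.
small_speedup = transfer_time_us(10, channels=1) / transfer_time_us(10, channels=8)
print(f"10 pages: speedup {small_speedup:.1f}x")
```

The second case shows why the eightfold figure is an upper bound: with 10 pages on 8 channels, two rounds are needed and six channels sit idle in the second one.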

Form factor is the SSD’s physical size and determines where the SSD can be installed (PCs, servers, laptops). Different scenarios require different form factors, which is also an important selection factor in enterprise environments. Common SSD form factors can be divided into four types, with the first three being the most important.

The 2.5-inch form factor measures 100mm (L) x 70mm (W) x 7mm/9.5mm (H), the same as traditional 2.5-inch HDDs, making it the most universal form factor. The interface is primarily SATA (with a few U.2 versions supporting NVMe), and it can directly replace an HDD without hardware modification. Typical use cases: consumer PCs, laptops, servers (non-high-density deployments), and large-capacity backup storage. Its compatibility is very broad, and it is one of the most mainstream form factors today.

The M.2 form factor is miniaturized, with common sizes 2230 (22mmx30mm), 2242 (22mmx42mm), 2260 (22mmx60mm), and 2280 (22mmx80mm), of which 2280 is the most mainstream. Interfaces are divided into SATA (B key) and NVMe (M key), and the NVMe-based M.2 SSD (M key) delivers the best performance, making it the preferred choice for enterprise servers and high-end PCs. Use cases include enterprise servers (high-density deployments), high-end PCs, and laptops (thin-and-light systems). Its small size saves chassis space and suits high-density deployments such as data center blade servers.
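M.2 size codes encode the card dimensions directly: the first two digits are the width in millimetres and the remaining digits are the length. A minimal sketch of decoding them follows; the function name is hypothetical, and it assumes a two-digit width followed by a two- or three-digit length.

```python
def parse_m2_size(code: str) -> tuple[int, int]:
    """Split an M.2 size code such as '2280' into (width_mm, length_mm)."""
    if not code.isdigit() or len(code) not in (4, 5):
        raise ValueError(f"not an M.2 size code: {code!r}")
    return int(code[:2]), int(code[2:])


for code in ("2230", "2242", "2260", "2280"):
    width, length = parse_m2_size(code)
    print(f"{code}: {width}mm x {length}mm")
```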

The U.2 form factor has the same size as a 2.5-inch SSD (100mmx70mmx7mm), but the interface differs: it uses the U.2 interface (SFF-8639) and supports NVMe only. Core characteristics include strong performance (supporting PCIe 4.0/5.0) and high reliability (supporting hot swap and power-loss protection), making it suitable for enterprise core workloads. Typical uses include enterprise servers and core data-center storage such as databases and virtualization. Hot swap makes maintenance easier, and power-loss protection safeguards data. It is the preferred form factor for high-performance enterprise SSDs.

mSATA SSDs are miniaturized and mainly used in older laptops and embedded devices, but they are gradually being replaced by M.2 SSDs. U.3 SSDs are the upgraded version of U.2, supporting PCIe 5.0 and higher bandwidth, making them suitable for next-generation high-performance storage in data centers.

Combining the architecture, protocols, and FTL core discussed above, we can trace a complete “host writes data” workflow to connect all concepts and fully understand how SSDs work (the read flow is similar, just in reverse).

The host initiates a write request. The host (for example, a server) converts the file-system write request into a logical address (LBA) and sends it to the SSD controller through the front-end protocol (SATA/NVMe).

The controller receives and parses the request. It parses the front-end protocol command, extracts the logical address (LBA) and data, and temporarily writes the data into cache (DRAM/HMB), decoupling host speed from NAND speed.

FTL address mapping. FTL queries the mapping table and assigns a free physical address (PBA, corresponding to a page in NAND flash) to the current logical address (LBA), while updating the mapping table.

Back-end control performs the write. The controller uses back-end control (multi-channel) to write the data from cache into the corresponding physical page in NAND flash. At this point the new data is written, while the page holding the old data is marked invalid.

FTL maintenance and optimization. If the current block has already undergone many erase cycles, FTL uses wear leveling to write subsequent data to blocks with fewer erase cycles. When free space falls below a threshold, FTL automatically triggers garbage collection to reclaim the space occupied by invalid pages.

The controller returns the result. The controller sends a successful-write signal back to the host through the front-end protocol, and the write process is complete.
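The mapping and invalidation steps of the write flow can be sketched as a toy page-level FTL. Everything here is a simplification under stated assumptions: the class and field names are hypothetical, the DRAM cache stage is skipped, wear leveling is reduced to "pick the block with the fewest erases that still has a free page", and garbage collection is not implemented.

```python
class ToyFTL:
    """Page-level FTL sketch: out-of-place writes and invalidation only."""

    def __init__(self, num_blocks: int = 4, pages_per_block: int = 4):
        self.pages_per_block = pages_per_block
        self.l2p = {}                           # mapping table: LBA -> (block, page)
        self.invalid = set()                    # physical pages holding stale data
        self.erase_counts = [0] * num_blocks    # per-block wear (never changes here)
        self.next_free = [0] * num_blocks       # next unwritten page in each block
        self.nand = {}                          # (block, page) -> stored data

    def _allocate(self):
        # Simplified wear leveling: least-erased block that still has a free page.
        candidates = [b for b, used in enumerate(self.next_free)
                      if used < self.pages_per_block]
        if not candidates:
            raise RuntimeError("no free pages; a real FTL would garbage-collect here")
        block = min(candidates, key=lambda b: self.erase_counts[b])
        page = self.next_free[block]
        self.next_free[block] += 1
        return block, page

    def write(self, lba: int, data: bytes) -> str:
        pba = self._allocate()          # assign a free physical page
        self.nand[pba] = data           # back-end write to NAND
        old = self.l2p.get(lba)
        if old is not None:
            self.invalid.add(old)       # old copy becomes invalid, not erased
        self.l2p[lba] = pba             # update the mapping table
        return "OK"                     # completion returned to the host

    def read(self, lba: int) -> bytes:
        return self.nand[self.l2p[lba]]


ftl = ToyFTL()
ftl.write(7, b"v1")
ftl.write(7, b"v2")   # overwrite lands on a new page
print(ftl.read(7), ftl.l2p[7], ftl.invalid)
```

Overwriting LBA 7 leaves the first physical page in place but marked invalid, which is exactly the space that garbage collection would later reclaim.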

The operating principle of an SSD is fundamentally a collaboration between hardware and software. Its core is to use FTL to work around NAND flash’s physical limitations, use front-end protocols to communicate at high speed with the host, and use back-end control to perform parallel read/write operations on NAND flash. Although it seems complex, it can be broken down into three main parts: hardware (controller, NAND, cache), software (FTL, firmware), and protocols (front-end SATA/NVMe, back-end ONFI).